Teams are shipping AI features at a rate the industry hasn't seen before. Prospect scoring. Smart replies. Automated summaries. Predictive prioritisation. The technology is genuinely impressive.

And then nobody uses it.

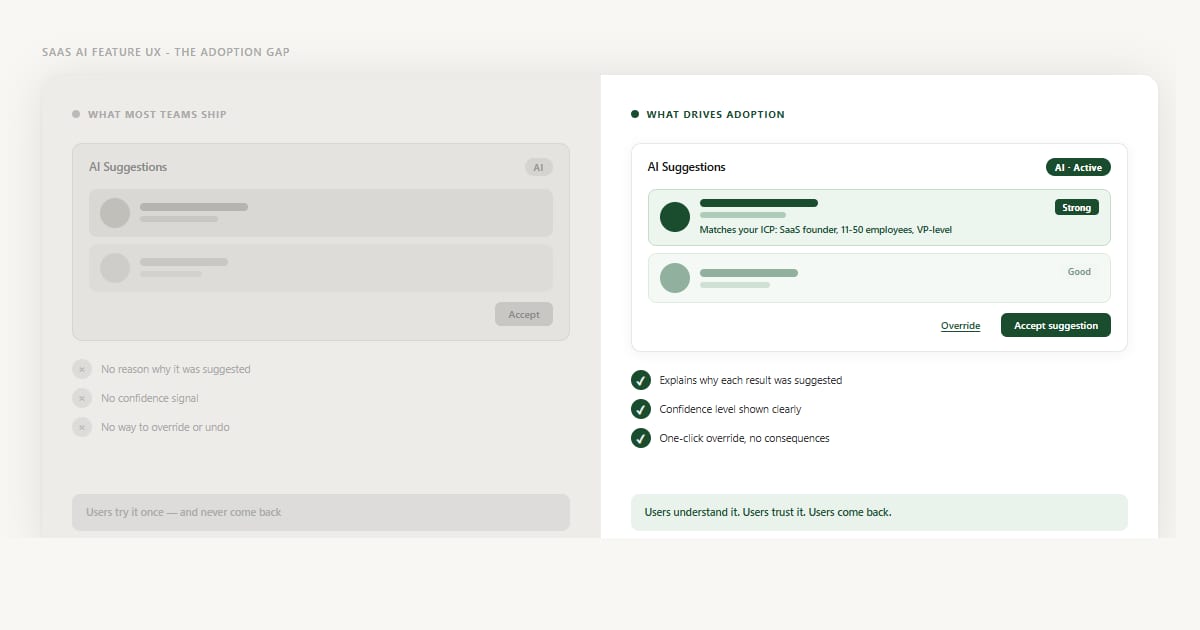

Research consistently shows that the majority of AI features in SaaS products see fewer than one in four users engaging with them regularly - not because the models fail, but because the experience around the model gives users no reason to trust it. The AI works. The UX doesn't.

The Gap Is Not About Capability

When teams investigate low AI adoption, the first instinct is to look at the model. Is the accuracy high enough? Are the suggestions relevant? Should we retrain on more data?

These are reasonable questions - but they're almost never where the problem lives. Users don't abandon AI features because the model occasionally makes a wrong suggestion. They abandon them because they have no way to judge whether a suggestion is right or wrong. When you can't evaluate a recommendation, the rational move is to ignore it.

This is the adoption gap: the distance between what the AI can do and what users feel safe acting on. It's a design problem, and it has three root causes that appear in almost every low-adoption AI feature I've reviewed.

Three UX Failures That Kill AI Adoption

1. Black-box suggestions with no reasoning

A suggestion appears. There is a name, a score, a button. No explanation of why this was surfaced. No criteria you can inspect. No signal that distinguishes a strong match from a weak one. The user is being asked to act on pure output with no access to input.

This feels fine in a product demo. In daily use, it trains users to distrust every suggestion - because once one recommendation turns out to be wrong and there's no way to understand why, the whole system becomes suspect. Teams spend months improving model accuracy and see no lift in adoption because the problem was never the accuracy. It was the opacity.
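One way to picture the fix is a suggestion payload that carries its reasons alongside the score. The sketch below is illustrative only: `Reason`, `Suggestion`, and `render` are hypothetical names, and the example signal is invented, not taken from any particular product or model.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Reason:
    signal: str  # hypothetical name of the input the model weighed
    detail: str  # plain-language explanation shown to the user


@dataclass(frozen=True)
class Suggestion:
    title: str
    score: float
    reasons: Tuple[Reason, ...] = ()


def render(suggestion: Suggestion, max_reasons: int = 3) -> str:
    """Render a suggestion as text, always saying why it was surfaced."""
    lines = [suggestion.title]
    if not suggestion.reasons:
        # Be explicit when no reasoning is available instead of hiding the gap.
        lines.append("  No explanation available for this suggestion.")
    else:
        lines.append("  Why this was suggested:")
        for reason in suggestion.reasons[:max_reasons]:
            lines.append(f"  - {reason.detail}")
    return "\n".join(lines)


example = Suggestion(
    title="Acme Corp",
    score=0.82,
    reasons=(Reason("recent_activity", "Visited the pricing page 3 times this week"),),
)
print(render(example))
```

The design choice worth noting is that the reasons travel with the suggestion itself, so the interface has no way to show an output without also having access to the inputs behind it.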

2. All suggestions look equally confident

A strong signal and a weak signal are displayed the same way. Same card. Same layout. Same button. No differentiation. So users either apply the same scepticism to everything - ignoring the good suggestions along with the weak ones - or they accept everything uncritically, which leads to bad outcomes that erode trust even faster.

Confidence is information. When you hide it, you're asking users to do work the model has already done. Surfacing confidence levels - clearly, in plain language - lets users allocate their attention where it matters. A list of AI suggestions where "Strong match" items are visually distinct from "Worth reviewing" items is a tool. A flat list of identical cards is noise.
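As a rough sketch of what surfacing confidence in plain language could look like, the snippet below maps a model score to a label and groups suggestions by it. The thresholds (0.8 and 0.5) and the third "Low confidence" tier are assumptions for illustration; real cut-offs would need to be calibrated against how the model's scores actually behave.

```python
from typing import Dict, List, Tuple

# Assumed thresholds, for illustration only.
STRONG_THRESHOLD = 0.8
REVIEW_THRESHOLD = 0.5


def confidence_label(score: float) -> str:
    """Translate a 0-1 model score into a plain-language label."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be between 0 and 1, got {score}")
    if score >= STRONG_THRESHOLD:
        return "Strong match"
    if score >= REVIEW_THRESHOLD:
        return "Worth reviewing"
    return "Low confidence"


def group_by_confidence(scored: List[Tuple[str, float]]) -> Dict[str, List[str]]:
    """Group suggestion names by label, strongest first within each group."""
    groups: Dict[str, List[str]] = {
        "Strong match": [],
        "Worth reviewing": [],
        "Low confidence": [],
    }
    for name, score in sorted(scored, key=lambda item: item[1], reverse=True):
        groups[confidence_label(score)].append(name)
    return groups


groups = group_by_confidence([("Lead A", 0.55), ("Lead B", 0.92), ("Lead C", 0.20)])
```

With groups like these, the interface can give each tier its own visual treatment instead of rendering one flat list of identical cards.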

3. Override feels risky or permanent

Even when users distrust a suggestion, many accept it anyway - because declining feels uncertain. Will it affect their account? Will the AI stop learning from them? Will this dismiss a future recommendation too? When the cost of disagreeing with the AI is unclear, users default to compliance, not because they think the AI is right but because the alternative feels unpredictable.

This is the most underestimated failure mode in AI UX. Users don't need to agree with every suggestion. They need to know that disagreeing is safe, easy, and consequence-free. The absence of a clear override path doesn't just frustrate users - it traps them.
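A minimal sketch of a safe override path, assuming a simple state model: dismissing a suggestion states its consequences up front and can always be undone. `Status`, `SuggestionState`, and the message wording are hypothetical, and the promise in the message is only honest if the product really does scope a dismissal to that single suggestion.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Status(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    DISMISSED = "dismissed"


@dataclass
class SuggestionState:
    suggestion_id: str
    status: Status = Status.PENDING
    history: List[Status] = field(default_factory=list)

    def _set(self, new_status: Status) -> None:
        # Remember the previous status so any change can be reversed.
        self.history.append(self.status)
        self.status = new_status

    def accept(self) -> None:
        self._set(Status.ACCEPTED)

    def dismiss(self) -> str:
        """Dismiss the suggestion and return a message that states the consequences."""
        self._set(Status.DISMISSED)
        return "Dismissed. This only hides this one suggestion, and you can undo it at any time."

    def undo(self) -> bool:
        """Revert the most recent change. Returns False if there is nothing to undo."""
        if not self.history:
            return False
        self.status = self.history.pop()
        return True


state = SuggestionState("suggestion-1")
message = state.dismiss()
restored = state.undo()
```

The point of the sketch is that disagreeing is a reversible, clearly scoped action, so declining a suggestion never feels like a commitment.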